I have deeply mixed feelings about #ActivityPub's adoption of JSON-LD, as someone who's spent way too long dealing with it while building #Fedify.

-

@evan @kopper @gugurumbe what, you got a book on caching you want to plug too?

-

@evan @gugurumbe i know what caching is, thanks. in fact, my current project is building one that's tailor made for solving the activitypub thundering herd problem (codeberg.org/KittyShopper/middleap)

i've been trying to keep civil through this thread largely because i started the conversation mentioning software i (temporarily) help maintain and therefore represent it even implicitly, but leaving that aside and letting my own personal thoughts enter the picture:

i think this passive aggressive reply is the last straw. thinking that i somehow know enough to write code for this protocol without knowing what a cache is? plugging your book in a network largely developed by poor minorities (i myself have the rough equivalent of less than 40 USD in my bank account total)? this inability to consider change? ("as2 requires compaction", because you're the one defining the spec saying it does), the inability to consider the people and software producing and building upon the data, as opposed to the data itself? the inability to consider the consequences of your specifications and how they're being used in the real world?

i honestly do not know if this line of thought is truly capable of leading this protocol out the slump it's currently in. if you're insistent on shooting yourself in the foot, so be it, but please take the time to consider how this behavior affects other people.

i've largely been burnt out of interacting in socialhub and other official protocol communities due to exactly this behavior, whether from you or others with influence on the final specs, and the only reason i keep trying is because of what's probably a self-destructive autistic hyperfixation on this niche network and trying to make it actually work for me and my friends, as opposed to receiving funding from the well-known genocide enablers at meta and trying to shove failing standards where they don't belong.

please be a better example. if the protocol was actually desirable then sure, you may have earnt it, after all, atproto is teeming with silicon valley e/acc death cult weirdos and yet people seem to prefer it. have you wondered why? or do you prefer to dismiss anything not coming from you without thinking about it

@kopper @gugurumbe sorry, friend, for the curt response. I'm flying today for a death in the family, and I'm having a hard time keeping a lot of conversations going. You should have heard me trying to chair a meeting as I went through airport security!

-

@kopper @gugurumbe sorry, friend, for the curt response. I'm flying today for a death in the family, and I'm having a hard time keeping a lot of conversations going. You should have heard me trying to chair a meeting as I went through airport security!

Anyway, to me, a backwards-incompatible change is absolutely the worst possible choice we could make for the Fediverse. It splits the network, possibly permanently. We have about 100 implementations of ActivityPub, and they can't all upgrade at the same time.

-

Anyway, to me, a backwards-incompatible change is absolutely the worst possible choice we could make for the Fediverse. It splits the network, possibly permanently. We have about 100 implementations of ActivityPub, and they can't all upgrade at the same time.

@kopper @gugurumbe I just don't think the downside of having to cache the results of context URL fetches outweighs that.

-

Anyway, to me, a backwards-incompatible change is absolutely the worst possible choice we could make for the Fediverse. It splits the network, possibly permanently. We have about 100 implementations of ActivityPub, and they can't all upgrade at the same time.

@evan @gugurumbe

here is a backwards incompatible change in a fep you authored: codeberg.org/fediverse/fep/src/branch/main/fep/b2b8/fep-b2b8.md#attributedto (specifically, the Link-and-name bit. mobile Firefox does not let me send highlights apparently)

the http signature draft->rfc change is backwards incompatible.

mastodon api to c2s is backwards incompatible for client developers (and, if done correctly, would require long and unwieldy migrations on servers. ask firefish.social users how those kinds of migrations end up)

whatever the replacement for as:summary as content warnings would be backwards incompatible. replacing as:name with as:description for media alt text is backwards incompatible (gotosocial did it, and we adapted)

making webfinger optional is backwards incompatible

backwards compatibility is not here yet. now is the second best time to get rid of legacy cruft

-

@cwebber @kopper @hongminhee I talk about this in my book. Unless the receiving user is online at the time the server receives the Announce, it's ridiculous to fetch the content immediately. Receiving servers should pause a random number of minutes and then fetch the content. It avoids the thundering herd problem.

@evan @kopper @hongminhee But that means either:

- Users don't get to see content that has been federated to them for *minutes*

- Unless we show unverified messages, allowing for windows of impersonation attacks, in which substantial reputational damage can be done!

And also:

- Whenever I boost several of @vv's posts, her server can be down *for a while*. Random delays can help reduce load but not as substantially as signature verification

- This has to be done for both the activity *and* the object

- And there's no reason to include either the activity or the object if you care about not risking impersonation attacks, because you might as well just send {"@id": "https://example.org/post/12345/"}

-

@patmikemid I call it trust, then verify. Usually caching the data with a ttl of a short number of minutes is enough.

@evan @patmikemid @kopper @hongminhee Trust *then* verify?! That means accepting windows of impersonation attacks necessarily then, right...?!
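For readers following along, the two mechanisms mentioned in this exchange (a random pause before fetching, and a cache with a TTL of a few minutes) might look roughly like the sketch below. Everything here is an assumption for illustration: `TTLCache`, `jittered_delay`, and `fetch_with_cache` are hypothetical names, and `fetch` stands in for whatever HTTP client a server uses. It does nothing about the impersonation window raised in the reply above; it only shows the load-spreading part.

```python
import random
import time


class TTLCache:
    """Tiny in-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so tests do not have to sleep
        self._entries = {}  # key -> (expires_at, value)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self.clock() >= expires_at:
            # Entry is stale: drop it and report a miss.
            del self._entries[key]
            return None
        return value

    def put(self, key, value):
        self._entries[key] = (self.clock() + self.ttl, value)


def jittered_delay(max_minutes, rng=random):
    """Pick a random pause, in seconds, between 0 and max_minutes."""
    return rng.uniform(0, max_minutes * 60)


def fetch_with_cache(url, cache, fetch):
    """Return the cached copy of url if still fresh, else fetch and cache it."""
    cached = cache.get(url)
    if cached is not None:
        return cached
    value = fetch(url)
    cache.put(url, value)
    return value
```

A receiving server could schedule `fetch_with_cache` to run `jittered_delay(n)` seconds after an Announce arrives, so that many servers do not hit the origin at the same moment.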

-

@gugurumbe @cwebber @kopper @hongminhee AS2 requires compacted JSON-LD.

@evan @gugurumbe @cwebber @kopper @hongminhee only for terms defined in AS2, though?

if the activitystreams context is missing in an application/activity+json document, then you MUST assume/inject it. this means you can't redefine "actor" to mean "actor in a movie".

otherwise, you don't have to augment the context with anything else. "https://w3id.org/security#publicKey" is a valid property name. the proposal is to not augment the normative context where possible. no parsing context if there is no context
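A minimal sketch of the "assume/inject the context" rule described above, under stated assumptions: `with_as2_context` is a hypothetical helper, and appending the AS2 context last (so that its term definitions win over earlier ones under JSON-LD's later-definition-overrides rule) is this sketch's choice, not something the thread specifies. Property names that are already full IRIs are left untouched.

```python
AS2_CONTEXT = "https://www.w3.org/ns/activitystreams"


def with_as2_context(doc):
    """Return a copy of doc whose @context includes the AS2 context."""
    ctx = doc.get("@context")
    if ctx is None:
        contexts = []
    elif isinstance(ctx, list):
        contexts = list(ctx)
    else:
        contexts = [ctx]
    if AS2_CONTEXT not in contexts:
        # Assumption: put AS2 last so "actor" etc. cannot be redefined
        # by an earlier context in the list.
        contexts.append(AS2_CONTEXT)
    result = dict(doc)  # shallow copy; the input is not mutated
    result["@context"] = contexts[0] if len(contexts) == 1 else contexts
    return result
```

For example, a document with no `@context` at all comes back with only the AS2 context, and a key such as `"https://w3id.org/security#publicKey"` passes through unchanged.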

-

@evan@cosocial.ca @cwebber@social.coop @hongminhee@hollo.social @kopper@not-brain.d.on-t.work I feel like deferring activity resolution and publishing based on online status would only serve to create more reasons for your average person to feel that the fediverse is unstable- explaining the logistics of the herd problem to someone who doesn't know what a distributed system is is kinda difficult.

@julia you don't have to publish as soon as you receive it; you just have to publish before the user loads it.

If the pattern doesn't work for you right now, no problem. As Sharkey scales, I hope you remember it!

-

@evan @gugurumbe @cwebber @kopper @hongminhee only for terms defined in AS2, though?

if the activitystreams context is missing in an application/activity+json document, then you MUST assume/inject it. this means you can't redefine "actor" to mean "actor in a movie".

otherwise, you don't have to augment the context with anything else. "https://w3id.org/security#publicKey" is a valid property name. the proposal is to not augment the normative context where possible. no parsing context if there is no context

@trwnh i was replying to a post that wanted all expanded terms.

-

@evan @gugurumbe it's infeasible to preload all contexts, pretty much every pleroma instance hosts their own context on their own instance for example. then there is the obvious interop problems of how to handle contexts for new extensions your software is not aware of (though pretending like they're empty might work i guess?)

@kopper @evan @gugurumbe i think you can treat context identifiers as aliases. if you are already in a situation where you generally have to inject corrected contexts, then this should be doable.
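One way to read "treat context identifiers as aliases" is a lookup that maps many context URLs onto a handful of preloaded documents, with no network fetch. The sketch below is an assumption-heavy illustration: `resolve_context` and the alias tables are hypothetical, the preloaded documents are placeholders rather than real context contents, and matching per-instance contexts by URL path is a guess at how the self-hosted case could be handled. Unknown contexts are treated as empty, as floated in the post above.

```python
from urllib.parse import urlsplit


def resolve_context(url, exact_aliases, path_aliases, preloaded):
    """Map a context URL to a preloaded context document without fetching."""
    key = exact_aliases.get(url)
    if key is None:
        # Per-instance copies differ by host, so match on the path alone.
        key = path_aliases.get(urlsplit(url).path)
    if key is None:
        # Unknown extension: pretend its context is empty.
        return {"@context": {}}
    return preloaded[key]


# Placeholder data for illustration only; not real context contents.
PRELOADED = {
    "as2": {"@context": {"_placeholder": "as2 terms would go here"}},
    "instance-ext": {"@context": {"_placeholder": "extension terms"}},
}
EXACT = {"https://www.w3.org/ns/activitystreams": "as2"}
BY_PATH = {"/schemas/example-ext.jsonld": "instance-ext"}  # hypothetical path
```

With these tables, `https://a.example/schemas/example-ext.jsonld` and `https://b.example/schemas/example-ext.jsonld` resolve to the same preloaded document, which is the aliasing idea; whether trusting a path on an arbitrary host is acceptable is a separate policy question.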

-

@evan @patmikemid @kopper @hongminhee Trust *then* verify?! That means accepting windows of impersonation attacks necessarily then, right...?!

@cwebber yes. Like I said, very low risk. If you want to be absolutely safe, wait until your first user reads the content before verifying it. It's usually not immediate. Most users aren't online. (TM)

@patmikemid @kopper @hongminhee

-

@cwebber yes. Like I said, very low risk. If you want to be absolutely safe, wait until your first user reads the content before verifying it. It's usually not immediate. Most users aren't online. (TM)

@patmikemid @kopper @hongminhee

@evan @patmikemid @kopper @hongminhee I would consider myself a user which, when at her computer, is in a state we might call "terminally online"

-

@evan @patmikemid @kopper @hongminhee I would consider myself a user which, when at her computer, is in a state we might call "terminally online"

@cwebber lucky you, you get all the first deliveries!

-

@evan @gugurumbe

here is a backwards incompatible change in a fep you authored: codeberg.org/fediverse/fep/src/branch/main/fep/b2b8/fep-b2b8.md#attributedto (specifically, the Link-and-name bit. mobile Firefox does not let me send highlights apparently)

the http signature draft->rfc change is backwards incompatible.

mastodon api to c2s is backwards incompatible for client developers (and, if done correctly, would require long and unwieldy migrations on servers. ask firefish.social users how those kinds of migrations end up)

whatever the replacement for as:summary as content warnings would be backwards incompatible. replacing as:name with as:description for media alt text is backwards incompatible (gotosocial did it, and we adapted)

making webfinger optional is backwards incompatible

backwards compatibility is not here yet. now is the second best time to get rid of legacy cruft

@kopper @evan @gugurumbe that, and honestly a netsplit is probably the least of our concerns on this network; after all, we've been through this before with the migration from ostatus, and netsplits practically happen daily via defeds

-

@cwebber lucky you, you get all the first deliveries!

@evan @cwebber @patmikemid @kopper @hongminhee *sheepishly raises hand* why not standardize what everyone ended up doing instead since that seems to be faster *ducks*

-

@evan @cwebber @patmikemid @kopper @hongminhee *sheepishly raises hand* why not standardize what everyone ended up doing instead since that seems to be faster *ducks*

@aeva the thundering herd?

-

@evan @kopper @hongminhee The problem is that signing json-ld is extremely hard, because effectively you have to turn to the RDF graph normalization algorithm, which has extremely expensive compute times. The lack of signatures means that when I boost peoples' posts, it takes down their instance, since effectively *every* distributed post on the network doesn't actually get accepted as-is, users dial-back to check its contents.

Which, at that point, we might as well not distribute the contents at all when we post to inboxes! We could just publish with the object of the activity being the object's id uri

@cwebber @evan @kopper @hongminhee

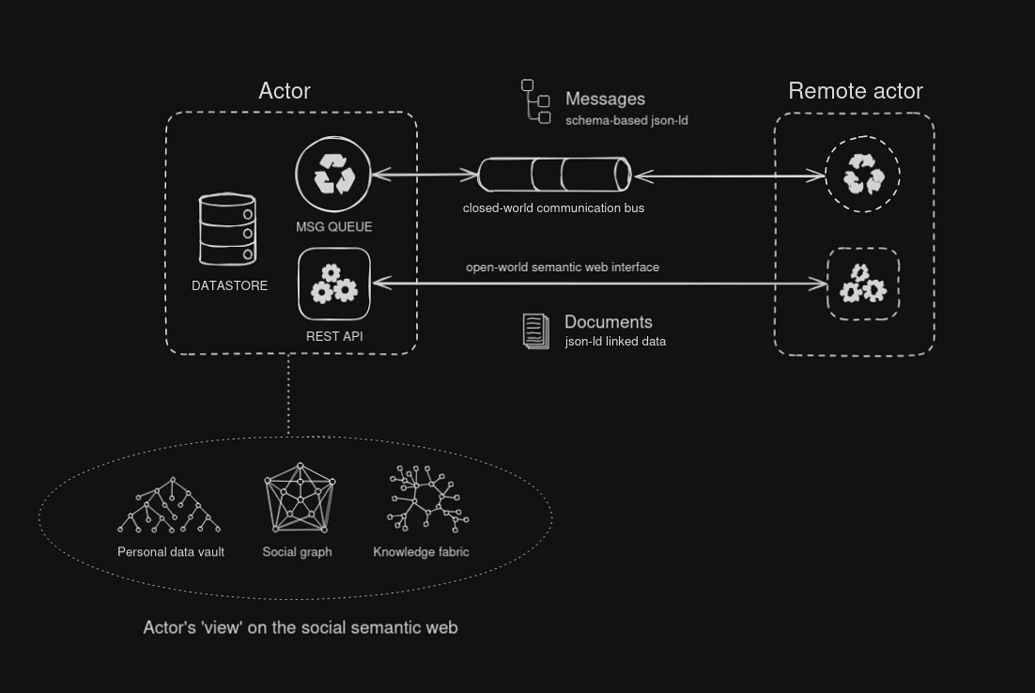

I may be naive and am not an expert here, but in my musings on a protosocial AP extension I imagined a clean separation of "message bus" where you'd want closed-world predictable msg formats defined by some schema (perhaps JSON Schema or LinkML). These msgs would be JSON-LD formatted but validated as plain JSON.

And then there would be the linked data side of the equation, where a semantic web is shaping up that is parsed with the whole set of open standards that exists here, but separate of the message bus. This is then a hypermedia, HTTP web-as-intended side. Open world and follow your nose, for those who want that, or minimum profile for the JSON-only folks.

It occurs to me these require separate/different extension mechanisms, guidelines and best-practices. The linked data part lends itself well for content and knowledge presentation, media publishing. While the msg bus gives me event driven architecture and modeling business logic / msg exchange.

-

@cwebber @evan @kopper @hongminhee

I may be naive and am not an expert here, but in my musings on a protosocial AP extension I imagined a clean separation of "message bus" where you'd want closed-world predictable msg formats defined by some schema (perhaps JSON Schema or LinkML). These msgs would be JSON-LD formatted but validated as plain JSON.

And then there would be the linked data side of the equation, where a semantic web is shaping up that is parsed with the whole set of open standards that exists here, but separate of the message bus. This is then a hypermedia, HTTP web-as-intended side. Open world and follow your nose, for those who want that, or minimum profile for the JSON-only folks.

It occurs to me these require separate/different extension mechanisms, guidelines and best-practices. The linked data part lends itself well for content and knowledge presentation, media publishing. While the msg bus gives me event driven architecture and modeling business logic / msg exchange.

@cwebber @evan @kopper @hongminhee

See the diagram sketch in my other toot posted today:

🫧 socialcoding.. (@smallcircles@social.coop)

Attached: 1 image

@julian@activitypub.space @evan@cosocial.ca Btw, some time ago in a matrix discussion I sketched how I'd like to conceptually 'see' the social network. Not Mastodon-compliant per se (though it might be via a Profile or Bridge) but back to "promised land". Where the protocol is expressed in familiar architecture patterns and borrows concepts from message queuing, actor model, event-driven architecture, etc.

Then as a "Solution designer" I am a stakeholder that wants to be completely shielded from all that jazz. That should all be encapsulated by the protocol libraries and SDK's that are offered in language variants across the ecosystem. #ActivityPub et al is a black box.

I can directly start modeling what should be exchanged on the bus, and I can apply domain driven design here. And if I have a semantic web part of my app I'd use linked data modeling best-practices. I would have power tools like #EventCatalog and methods like #EventModeling.

https://www.eventcatalog.dev/features/visualization https://eventmodeling.org/

Protosocial would further prescribe how an AsyncAPI definition can be obtained from an actor, which defines the service it provides i.e. msg formats and msg exchanges. AsyncAPI might need to be extended to adequately model things.

-

@evan @gugurumbe

here is a backwards incompatible change in a fep you authored: codeberg.org/fediverse/fep/src/branch/main/fep/b2b8/fep-b2b8.md#attributedto (specifically, the Link-and-name bit. mobile Firefox does not let me send highlights apparently)

the http signature draft->rfc change is backwards incompatible.

mastodon api to c2s is backwards incompatible for client developers (and, if done correctly, would require long and unwieldy migrations on servers. ask firefish.social users how those kinds of migrations end up)

whatever the replacement for as:summary as content warnings would be backwards incompatible. replacing as:name with as:description for media alt text is backwards incompatible (gotosocial did it, and we adapted)

making webfinger optional is backwards incompatible

backwards compatibility is not here yet. now is the second best time to get rid of legacy cruft

@kopper @gugurumbe sorry, I don't understand your point.

`attributedTo` has always had a range of `Object` or `Link`. The `Link` type has always had a `name` property.

I agree, HTTP Signature suuucks for us. I think the best we can do is double-knock and cache the results. I don't think that process has even started that much.
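As a rough illustration of "double-knock and cache the results": try one signature scheme, retry with the other if the receiver rejects it, and remember which one worked per host. This is a sketch under assumptions, not any implementation's actual behavior. `double_knock` is a hypothetical function, `send(scheme)` stands in for signing and delivering the request and returning the HTTP status, the scheme labels are just strings, and treating 400/401/403 as "signature rejected" is a guess.

```python
REJECTED = {400, 401, 403}  # assumption: statuses read as "signature not accepted"


def double_knock(host, send, cache, schemes=("rfc9421", "draft-cavage")):
    """Try signature schemes in order, remembering which one host accepted."""
    remembered = cache.get(host)
    # Stable sort: the remembered scheme (if any) moves to the front.
    order = sorted(schemes, key=lambda s: s != remembered)
    status = None
    for scheme in order:
        status = send(scheme)
        if status not in REJECTED:
            if 200 <= status < 300:
                cache[host] = scheme  # skip the first knock next time
            return scheme, status
    # Every scheme was rejected; report the last attempt.
    return order[-1], status
```

Here `cache` can be a plain dict keyed by host; a real server would presumably want entries to expire so a host that upgrades is re-probed eventually.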